AI Voice Cloning for Micro-Dramas: Localize Content Across 10+ Languages Fast

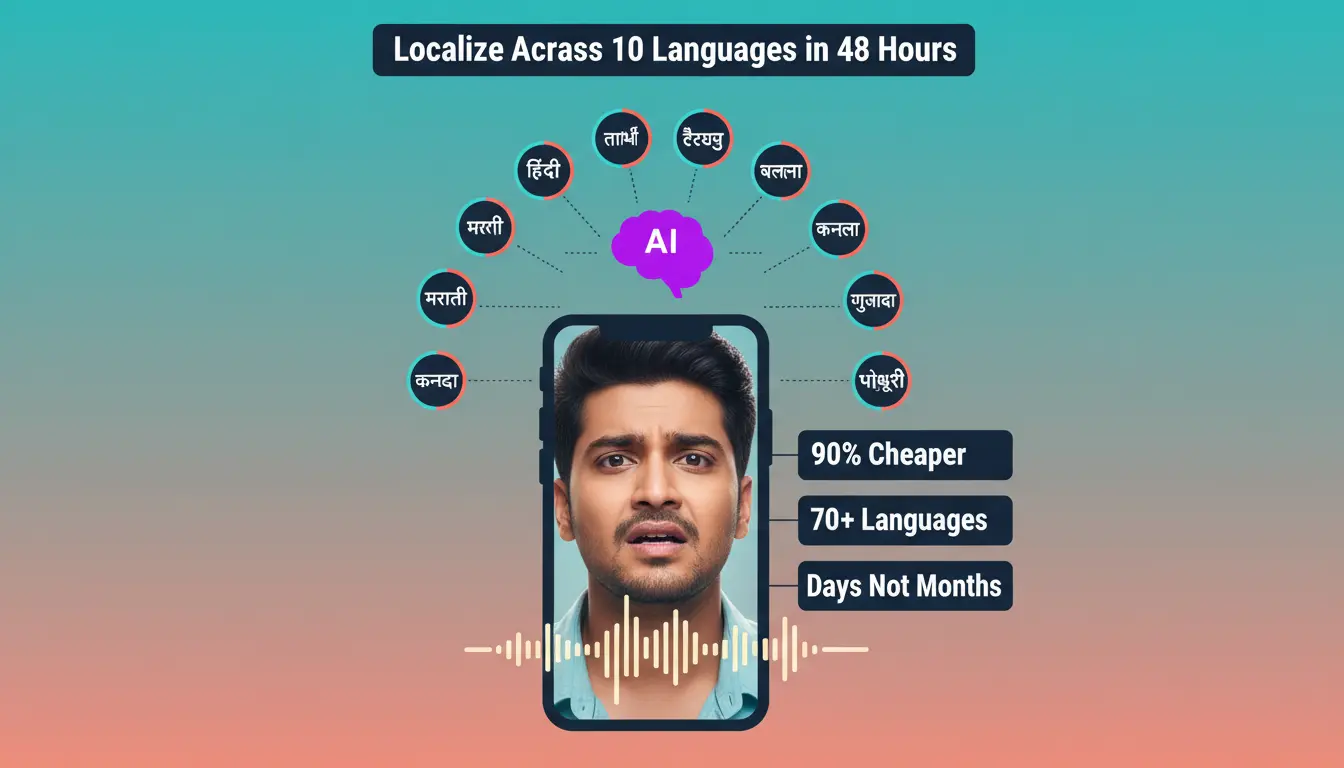

AI voice cloning technology enables micro-drama producers to localize content across 10+ regional languages in days instead of months, cutting dubbing costs by up to 90% while preserving the original speaker’s tone, emotional delivery, and personality. This breakthrough transforms Indian content economics, where production houses can now serve Hindi, Tamil, Telugu, Bengali, Marathi, Malayalam, Kannada, Gujarati, Punjabi, and Bhojpuri audiences simultaneously without prohibitive studio costs or quality compromises. With India’s vernacular content consumption driving 60-70% of the ₹4,000 crore micro-drama market, voice cloning represents the critical technology enabling producers to capture regional opportunities at scale.

How AI Voice Cloning Technology Works

AI dubbing combines speech recognition, neural machine translation, and voice synthesis to create authentic dialogue tracks in multiple languages while maintaining the speaker’s natural vocal characteristics. The process begins with automatic speech recognition (ASR) capturing every word, timestamp, pause, and tonal variation from the original recording. Professional linguists then review transcripts to correct errors before neural machine translation generates initial translations, which cultural experts refine to preserve intent, timing, and regional relevance.

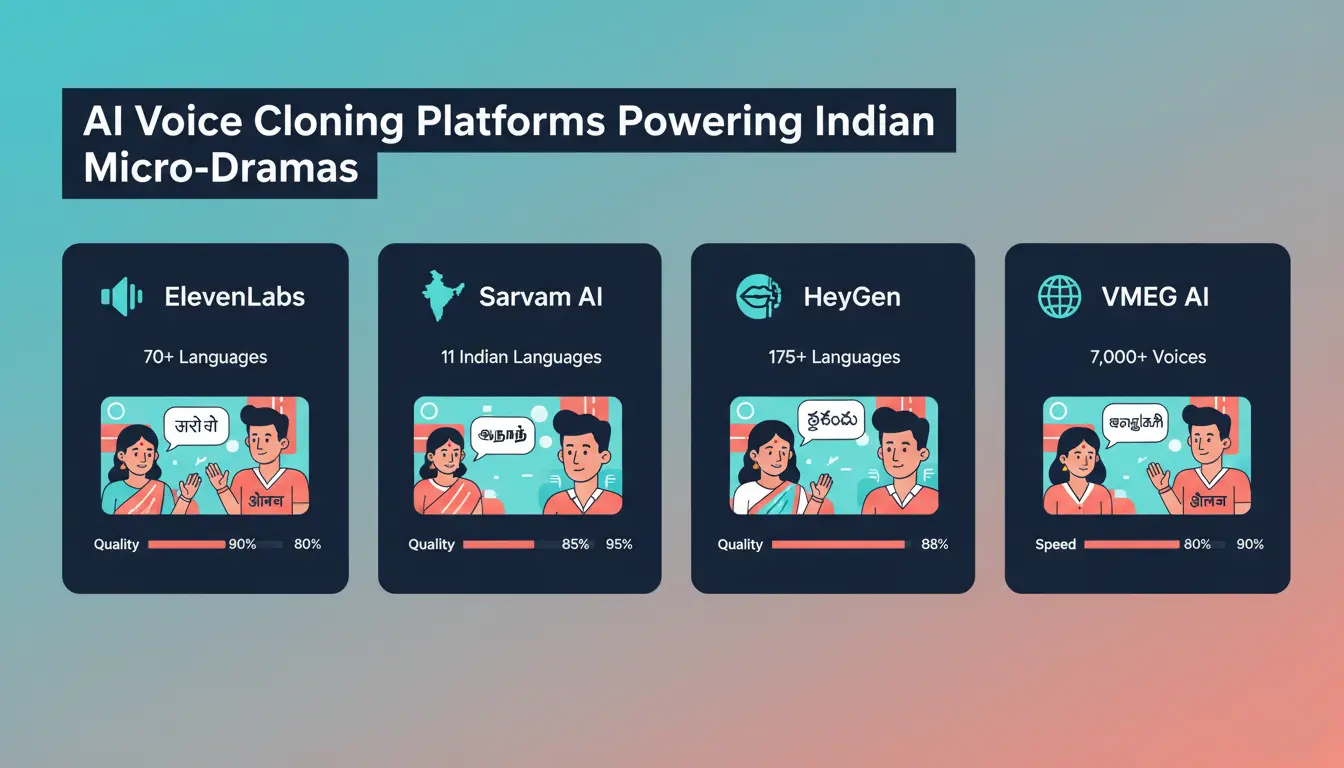

The breakthrough comes in voice cloning: AI analyzes pitch, pace, inflection, and emotional patterns to recreate the speaker’s distinctive sound in new languages. Leading platforms like ElevenLabs support 70+ languages with 5,000+ voice options, while India-focused Sarvam AI powers high-fidelity dubbing across 11 Indian languages. This technology enables the same actor to deliver authentic performances in Tamil, Telugu, and Hindi without re-recording, maintaining character consistency across regional micro-drama distributions.
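The stages described above can be pictured as a chain of transformations over timestamped dialogue segments. The following is a minimal sketch, not any platform's actual API: `Segment`, `linguist_review`, `translate`, and `placeholder_mt` are hypothetical names, and the translation step is a stand-in that only tags the text with the target language code.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict


@dataclass(frozen=True)
class Segment:
    """One timestamped line of dialogue (hypothetical structure)."""
    start: float          # seconds from the start of the episode
    end: float
    text: str
    language: str
    reviewed: bool = False


def linguist_review(seg: Segment, corrections: Dict[str, str]) -> Segment:
    """Apply human corrections to an ASR transcript and mark it reviewed."""
    return replace(seg, text=corrections.get(seg.text, seg.text), reviewed=True)


def translate(seg: Segment, target: str, mt: Callable[[str, str], str]) -> Segment:
    """Translate a reviewed segment; timestamps are carried over unchanged."""
    if not seg.reviewed:
        raise ValueError("segment must pass linguist review before translation")
    # The translated text still needs cultural review, so the flag is reset.
    return replace(seg, text=mt(seg.text, target), language=target, reviewed=False)


def placeholder_mt(text: str, target: str) -> str:
    """Stand-in for a real machine translation call (assumption)."""
    return f"[{target}] {text}"


asr_output = Segment(0.0, 1.8, "Where where you?", "en")  # ASR misheard "were"
reviewed = linguist_review(asr_output, {"Where where you?": "Where were you?"})
dubbed_script = translate(reviewed, "ta", placeholder_mt)
print(dubbed_script)
```

Keeping the original `start` and `end` values on every segment is what lets a later voice synthesis step fit the new audio into the same time slot as the source line.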

Leading AI Voice Cloning Platforms for 2026

ElevenLabs: Offers multilingual voice cloning across 70+ languages, with realistic speech synthesis that conveys intent and emotion. The platform detects multilingual text automatically and adjusts its delivery based on context. ElevenLabs has become a go-to choice for creators who need natural-sounding dubbing that keeps a speaker's vocal personality across languages.

Sarvam AI Studio: Launched specifically for Indian content creators, Sarvam Studio generates high-fidelity dubs in 11 Indian languages: Hindi, Tamil, Telugu, Bengali, Marathi, Gujarati, Kannada, Malayalam, Punjabi, Odia, and Assamese. Independent expert studies show participants prefer Sarvam Studio for overall quality and production readiness, with the platform’s Bulbul V3 text-to-speech model achieving the highest listener preference and the lowest error rates across Indian languages.

HeyGen: Translates video content across 175+ languages while preserving speaker identity through AI-cloned voices with unique tone and delivery. The platform’s text-to-video editor generates AI voices, applies lip sync, and aligns visuals automatically without manual audio editing, enabling same-day localization for viral micro-drama content.

VMEG AI: Comprehensive video localization platform supporting 170+ languages with 7,000+ realistic voices featuring emotional tone, regional accents, and personality variations. The platform combines voice cloning with lip-sync technology that aligns translated audio with on-screen speakers for natural mouth movement in real time.

The Economics: 90% Cost Reduction

Traditional dubbing involves layered expenses (voice talent, studio hire, direction, editing, and project management), with each language requiring its own production cycle. AI voice cloning eliminates most of that repetition, so the same content can be localized into dozens of languages at a fraction of the traditional cost. Industry research shows AI dubbing reduces costs by up to 90% while compressing production timelines from months to days.

A 60-minute training video that might cost tens of thousands to localize traditionally can now be ready in 10+ languages for a fraction of that investment. This efficiency proves transformative for producers creating regional language micro-dramas, where budgets already run 70-90% lower than conventional web series. The cost savings enable portfolio approaches where production houses test multiple regional markets simultaneously without catastrophic financial exposure.
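To make the arithmetic concrete, here is a small sketch of the "up to 90%" claim. The per-language price and the function name `localization_cost` are illustrative assumptions, not figures or tools from any vendor; the reduction is treated as a best-case upper bound.

```python
def localization_cost(cost_per_language: int, languages: int, reduction_pct: int = 90):
    """Return (traditional_total, ai_total) for a per-language dubbing cost.

    reduction_pct is the assumed saving from AI dubbing; 90 is the upper
    bound quoted in the text, so real projects may save less.
    """
    traditional = cost_per_language * languages
    ai = traditional * (100 - reduction_pct) / 100
    return traditional, ai


# Hypothetical inputs: 20,000 per language, 10 languages.
traditional, ai = localization_cost(cost_per_language=20_000, languages=10)
print(traditional, ai)
```

With these made-up inputs, ten languages come to 200,000 under traditional dubbing and 20,000 at a 90% reduction. Lowering `reduction_pct` shows how quickly the gap narrows when human review and retakes are added back in.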

Speed to Market: Days Instead of Months

AI-powered workflows run transcription, translation, and voice generation in parallel, collapsing traditional linear production timelines. What once required six weeks of sequential studio sessions now completes in 48-72 hours, enabling simultaneous launches across all regional markets. This velocity proves critical for micro-drama producers capitalizing on trending topics or seasonal content where timing determines commercial success.
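One plausible reading of "in parallel" is that, once a reviewed transcript exists, the per-language translation and voice jobs run side by side instead of one studio session after another. The sketch below assumes that reading; `dub_language` is a hypothetical placeholder for the real translate-and-synthesize work, and only the fan-out pattern is the point.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List, Tuple


def dub_language(transcript: str, lang: str) -> Tuple[str, str]:
    """Placeholder for translating and voicing one language (assumption)."""
    return lang, f"[{lang}] {transcript}"


def dub_all(transcript: str, languages: List[str], max_workers: int = 4) -> Dict[str, str]:
    """Run one dubbing job per language concurrently and collect the results."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in the same order as the input languages.
        results = pool.map(lambda lang: dub_language(transcript, lang), languages)
        return dict(results)


tracks = dub_all("Episode 1 script", ["hi", "ta", "te"])
print(tracks)
```

Threads suit this shape of work because each job would mostly wait on a remote service; the total time then approaches that of the slowest language instead of the sum of all of them.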

The “Kalaa Setu” framework developed for Indian content creators recommends phased expansion: start with Hindi plus one South Indian language (Tamil or Telugu) in the first 90 days, scale to the “Big Five” (adding Bengali and Malayalam) by 180 days, then expand to the “Bharat 10” including Marathi, Kannada, Gujarati, and Punjabi within 12 months. This structured approach builds operational muscle while perfecting quality controls, with automated QA checks ensuring linguistic accuracy and cultural appropriateness.
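The phased plan can be written down as a small schedule, which makes it easy to ask which languages should be in scope on a given day. This is a sketch under stated assumptions: `PHASES` and `planned_languages` are hypothetical names, Tamil is picked arbitrarily where the text allows Tamil or Telugu, 12 months is taken as 365 days, and only the languages the text names for each phase are listed.

```python
from typing import List

# (deadline in days, languages added in that phase)
PHASES = [
    (90, ["Hindi", "Tamil"]),        # Telugu is the stated alternative to Tamil
    (180, ["Bengali", "Malayalam"]),
    (365, ["Marathi", "Kannada", "Gujarati", "Punjabi"]),
]


def planned_languages(day: int) -> List[str]:
    """Return every language that should be in scope on the given day."""
    planned: List[str] = []
    for deadline, additions in PHASES:
        planned.extend(additions)
        if day <= deadline:
            break  # later phases have not started yet
    return planned


print(planned_languages(30))
print(planned_languages(120))
```

Encoding the rollout as data keeps the schedule in one place, so swapping Tamil for Telugu or shifting a deadline does not require touching the logic.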

Maintaining Quality Through Human-in-the-Loop

Pure automation risks missing cultural nuances, emotional subtleties, and contextual appropriateness that distinguish effective localization from technically accurate translation. The most successful workflows combine AI speed with human expertise through three critical checkpoints: script review by professional linguists approving final translations, cultural review by native experts ensuring tone and references land appropriately, and quality review where editors sign off on final mixing and timing.
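The three checkpoints behave like a gate: a dubbed track is publishable only once every review has signed off. A minimal sketch of that gate follows, with the checkpoint identifiers and function names chosen here purely for illustration.

```python
from typing import Optional, Set

# Review order taken from the text: script, then cultural, then quality.
CHECKPOINTS = ("script_review", "cultural_review", "quality_review")


def next_checkpoint(approved: Set[str]) -> Optional[str]:
    """Return the first checkpoint still awaiting sign-off, or None if done."""
    for name in CHECKPOINTS:
        if name not in approved:
            return name
    return None


def ready_to_publish(approved: Set[str]) -> bool:
    """A track ships only when every checkpoint has been approved."""
    return next_checkpoint(approved) is None


approvals = {"script_review"}
print(next_checkpoint(approvals))
print(ready_to_publish(approvals))
```

Because `next_checkpoint` always walks the list in order, an approval recorded out of sequence does not let a track skip an earlier review.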

This “Genuine Intelligence” approach prevents the uncanny valley effect where technically correct dubbing feels emotionally flat or culturally tone-deaf. For micro-drama hooks where the first three seconds determine viewer retention, emotional authenticity separates scroll-stopping content from algorithmic obscurity. Human oversight ensures voice cloning preserves not just words but the psychological triggers—shock, curiosity, emotional resonance—that drive engagement.

Lip-Sync Technology for Visual Authenticity

Advanced AI platforms now include lip-sync algorithms that align translated audio with speaker mouth movements, maintaining visual coherence essential for immersive viewing. VMEG AI and HeyGen lead this capability, using real-time processing to adjust facial movements matching new dialogue cadences. This technology proves particularly valuable for casting relatable faces where close-up emotional expressions drive micro-drama engagement.

Even slight timing mismatches create an uncanny valley effect that breaks viewer immersion, pulling attention away from the content and toward technical glitches. Professional-grade lip-sync prevents this distraction, enabling audiences to focus on storytelling rather than production artifacts. For vertical video formats where faces occupy the entire mobile screen, this synchronization becomes non-negotiable for maintaining production credibility.

Implementation Strategy for Production Houses

Start with high-performing content where localization ROI proves easiest to measure—top 10 videos by viewership make ideal pilot projects. Focus initial localization efforts on two languages (Hindi plus one regional option) to perfect glossary accuracy, voice consistency, and cultural adaptation before scaling. Target lip-sync quality scores above 0.92 and reduce cycle time per language to under 24 hours through iterative workflow optimization.
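The pilot selection and the two targets above are simple enough to express directly. In this sketch the thresholds (0.92 and 24 hours) come from the text, while the function names, the view counts, and the assumption that a lip-sync score is a single number between 0 and 1 are illustrative.

```python
from typing import Dict, List


def pick_pilots(views_by_video: Dict[str, int], n: int = 10) -> List[str]:
    """Return the n most-viewed video IDs, highest first."""
    return sorted(views_by_video, key=views_by_video.get, reverse=True)[:n]


def meets_targets(lip_sync_score: float, cycle_hours: float) -> bool:
    """Check one language run against the targets quoted in the text."""
    return lip_sync_score > 0.92 and cycle_hours < 24


# Hypothetical catalogue and a hypothetical measurement.
catalogue = {"ep1": 500, "ep2": 1200, "ep3": 800}
print(pick_pilots(catalogue, n=2))
print(meets_targets(lip_sync_score=0.95, cycle_hours=20))
```

Tracking both numbers per language, not per project, makes it visible when one language lags the others in quality or turnaround.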

Integrate AI voice cloning with existing text-based editing workflows to maximize efficiency gains, as both technologies share cloud-based collaboration advantages and automated processing pipelines. Production houses already using Descript or similar platforms can add multilingual capabilities without rebuilding entire post-production infrastructure. This layered approach to technology adoption spreads implementation risk while compounding efficiency improvements.

Ethical Considerations and Consent Frameworks

Voice cloning requires explicit, informed consent from performers clearly specifying usage scope, territories, duration, and revocation rights. Industry unions like SAG-AFTRA establish standards requiring contracts to define exact use cases (internal training versus public advertising), geographic licensing, term limits, and fair compensation models including residuals for ongoing digital voice usage. Organizations must implement robust security protocols including end-to-end encryption, strict access controls, and clear audit trails demonstrating proper consent documentation.
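A consent agreement of this kind maps naturally onto a record that is checked before any cloned voice is generated. The sketch below is one possible shape, not a legal template or a union-mandated schema: `VoiceConsent`, its fields, and the example values are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet


@dataclass(frozen=True)
class VoiceConsent:
    """What a performer has agreed to (hypothetical record layout)."""
    performer: str
    use_cases: FrozenSet[str]    # e.g. "micro_drama_dub", "public_advertising"
    territories: FrozenSet[str]  # country codes the licence covers
    expires: date                # last day the consent is valid
    revoked: bool = False

    def permits(self, use_case: str, territory: str, on: date) -> bool:
        """True only if the request falls inside every agreed limit."""
        return (
            not self.revoked
            and on <= self.expires
            and use_case in self.use_cases
            and territory in self.territories
        )


consent = VoiceConsent(
    performer="Performer A",
    use_cases=frozenset({"micro_drama_dub"}),
    territories=frozenset({"IN"}),
    expires=date(2026, 12, 31),
)
print(consent.permits("micro_drama_dub", "IN", date(2026, 6, 1)))
print(consent.permits("public_advertising", "IN", date(2026, 6, 1)))
```

Logging each `permits` call alongside its result would give the kind of audit trail the paragraph above calls for.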

Audio watermarking provides forensic traceability, embedding invisible digital signatures that prove content authenticity while preventing deepfake misuse. For brand co-production deals involving celebrity voices or branded content formats, these ethical frameworks protect both talent and producers from reputation risks. Transparent governance policies transform voice cloning from legal liability into sustainable competitive advantage.

The Multi-Language Content Advantage

Over 700 million non-English speaking internet users will be active across India by 2026, with vernacular content consumption accounting for over 80% of engagement in Tier-2/3 markets. Production houses localizing content across 10+ regional languages access cumulative audiences 5-10X larger than English or Hindi-only strategies. EY-FICCI reports show Indian media growth heavily skewed toward regional digital content, with AI-driven localization shortening production timelines by up to 80%.

YouTube’s single-channel multi-audio model enables uploading multiple language tracks to one video, with algorithms automatically serving appropriate versions based on viewer language preferences. This distribution strategy maintains SEO authority and subscriber consolidation while serving personalized experiences, dramatically outperforming separate regional channels that fragment audiences and dilute algorithmic signals. For micro-drama series where serialized engagement drives retention, unified channels with multilingual audio maximize binge behavior and playlist completion rates.

AI voice cloning democratizes global content production, transforming expensive, time-intensive localization into fast, affordable workflows that enable even small production houses to compete across India’s entire linguistic landscape with authentic, culturally resonant storytelling.

FAQs

Q1: How accurate is AI voice cloning for regional Indian languages?

Leading platforms like Sarvam AI achieve expert-preferred quality across 11 Indian languages, with Bulbul V3 delivering the highest listener preference and the lowest error rates in third-party testing.

Q2: How much does AI voice cloning cost compared to traditional dubbing?

AI dubbing reduces costs by up to 90%, so a project that would traditionally cost tens of thousands can come in at thousands, while timelines shrink from months to 48-72 hours.

Q3: Can AI voice cloning maintain emotional delivery across languages?

Advanced platforms preserve pitch, pace, inflection, and emotional patterns, though human-in-the-loop review ensures cultural nuances and authentic expression land correctly in each language.

Q4: Which languages should Indian micro-drama producers prioritize first?

Start with Hindi plus one South Indian language (Tamil or Telugu), then scale to the “Big Five” (adding Bengali and Malayalam) before expanding to 10+ regional markets.

Q5: Does AI voice cloning require consent from original speakers?

Yes—explicit, informed consent specifying usage scope, territories, duration, and compensation is legally required and ethically essential for voice cloning implementation.