AI-Native Cinematography: How New Camera Language Is Changing Visual Storytelling

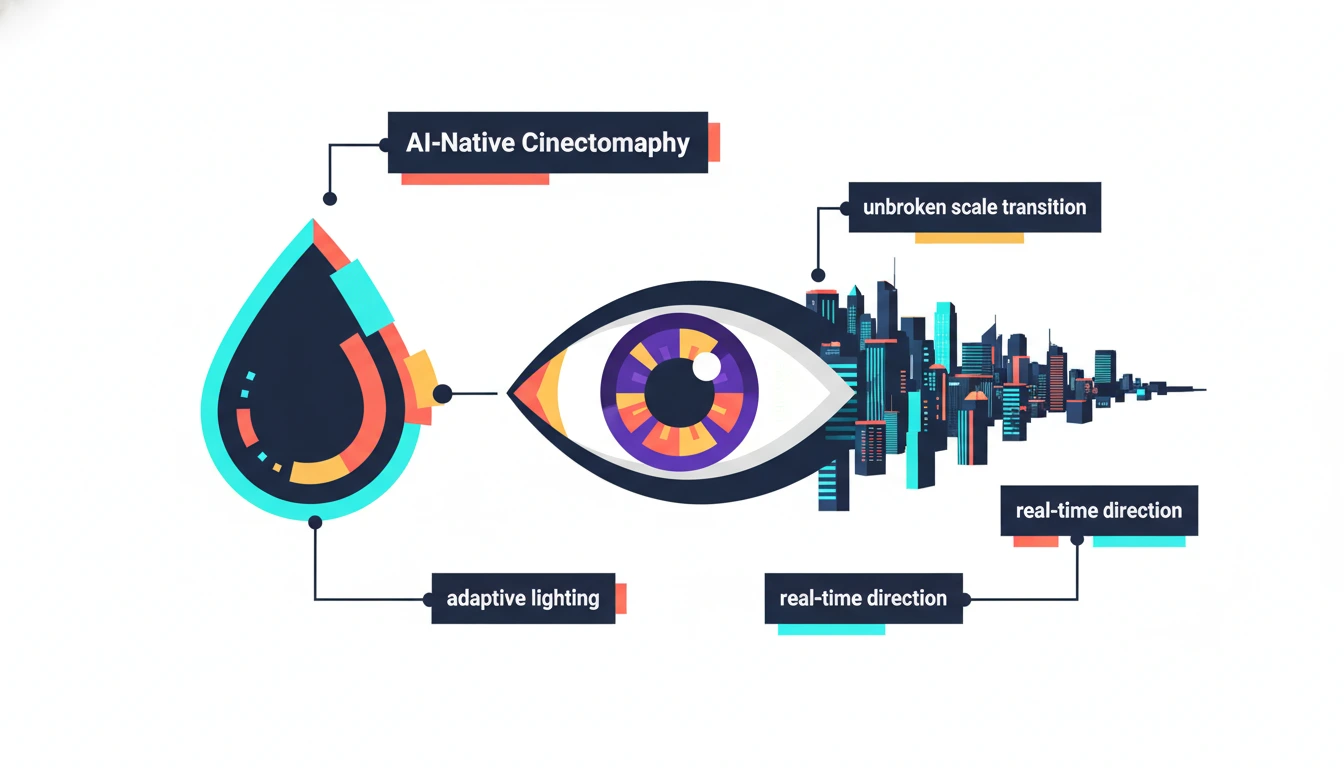

AI video generation has crossed a decisive threshold in 2026: it no longer simply mimics traditional filmmaking but is developing its own cinematic language. Camera movements that are impossible to execute physically, lighting that shifts dynamically with a character’s emotional state, and pacing tuned algorithmically to viewer attention in real time are emerging as the defining characteristics of AI-native visual storytelling. For Indian production houses building micro-drama content at scale, understanding this new grammar isn’t optional. It’s the competitive edge that separates forgettable content from franchise-worthy IP.

From Replication to Invention

Until recently, AI video tools worked as digital impersonators, replicating traditional cinematography rules, fixed camera grammar, and human-style editing. That phase is over. Tools like Veo 3.1, Sora 2 Pro, and Kling 3.0 now respond to actual director vocabulary (dolly moves, rack focus, Dutch angles, crane reveals) and execute it with what reads as cinematographic understanding rather than simple pattern matching.

Directors describe blocking, camera movement, and emotional beats in prompts, and the AI renders sequences that would once have required weeks of pre-production planning. Actor Rana Daggubati captured the shift precisely: “AI now allows creators to almost watch their movies before a single frame is shot.” The implication for Indian filmmakers is profound: pre-visualization, location scouting, storyboarding, and casting previews now collapse into a single AI-assisted session.
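One way a team might keep that director vocabulary consistent across many shots is to record each shot as structured fields and render them into a plain-language prompt. The sketch below is a minimal illustration under that assumption: `ShotSpec` and `to_prompt` are hypothetical names, and the output is ordinary text, not the prompt schema of Veo, Sora, Kling, or any other tool.

```python
from dataclasses import dataclass, field


@dataclass
class ShotSpec:
    """Hypothetical shot description; field names are illustrative, not any tool's schema."""
    subject: str
    blocking: str
    camera_move: str
    lens: str
    emotional_beat: str
    extras: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # Join the director's shot vocabulary into one plain-text prompt,
        # one sentence per element, in a fixed order.
        parts = [
            f"{self.camera_move} on {self.subject}",
            f"Blocking: {self.blocking}",
            f"Lens: {self.lens}",
            f"Mood: {self.emotional_beat}",
            *self.extras,
        ]
        return ". ".join(parts) + "."


shot = ShotSpec(
    subject="a young woman at a Mumbai train platform",
    blocking="she steps back as the train pulls in",
    camera_move="Slow dolly-in",
    lens="85mm, shallow depth of field, rack focus to the train",
    emotional_beat="quiet dread turning to resolve",
)
print(shot.to_prompt())
```

Keeping the fields separate means a director can change one element, such as the lens or the emotional beat, and regenerate without rewriting the whole prompt.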

The Birth of AI-Native Camera Grammar

Traditional cinematography is constrained by physics — cameras have weight, lenses have focal limits, cranes have reach. AI has none of these limitations, and its emerging visual grammar reflects this freedom.

Unbroken Scale Transitions: AI can seamlessly merge macro and landscape scales within a single continuous shot — moving from an extreme close-up of a teardrop to a wide aerial cityscape without cuts. No crane, drone, or optical system can achieve this.

Emotionally Adaptive Lighting: Instead of fixed lighting setups, AI generates color and light that shift dynamically to mirror a character’s internal state mid-scene, such as warm amber dissolving into cold blue as hope turns to despair.

Real-Time Direction: By late 2026, platforms like Higgsfield are enabling creators to manipulate virtual cameras and adjust character expressions while the AI regenerates the video stream instantly, turning generation into a live performance rather than a static prompt submission. This shifts the director’s workflow from writing instructions to conducting a scene.

Algorithmic Pacing: AI analyzes viewer attention patterns to optimize cut timing, motion speed, and visual density in real time — producing content that holds attention not through intuition but through behavioral data.

What This Means for Micro-Drama Production

For production houses building hook-driven episodes where the first 3 seconds determine retention, AI-native cinematography delivers three decisive advantages:

Speed: AI-generated establishing shots, atmospheric B-roll, and transition sequences can eliminate location shoots for non-dialogue content, compressing pre-production from weeks to hours.

Scale: Studios in Mumbai are currently producing 550+ titles using AI-assisted workflows. AI-native tools make this volume possible without a proportional increase in crew size.

Experimentation: Testing five visual styles, three color grades, and two camera approaches for a single episode (5 × 3 × 2 = 30 combinations) costs almost nothing with AI, enabling data-driven creative decisions before committing to full production.

Post-production efficiency compounds these gains, since much of the editing can be automated once the visual direction is locked.
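The experimentation advantage is essentially a combinatorial test matrix: five styles, three grades, and two camera approaches give 30 variants to generate and compare. A minimal sketch of building that matrix, assuming each variant is tracked as a simple record; the option names and `build_test_matrix` are hypothetical placeholders, and how each variant is generated and scored is left to whatever tools and retention data a studio actually uses.

```python
from itertools import product

# Hypothetical option lists; the values are placeholders, not recommendations.
visual_styles = ["noir", "pastel", "neon", "documentary", "anime"]
color_grades = ["warm", "teal-orange", "desaturated"]
camera_approaches = ["handheld", "locked-off"]


def build_test_matrix(styles, grades, cameras):
    """Return one variant record per combination of style, grade, and camera approach."""
    return [
        {"id": f"v{i:02d}", "style": s, "grade": g, "camera": c}
        for i, (s, g, c) in enumerate(product(styles, grades, cameras), start=1)
    ]


variants = build_test_matrix(visual_styles, color_grades, camera_approaches)
print(len(variants))  # 30
print(variants[0])    # {'id': 'v01', 'style': 'noir', 'grade': 'warm', 'camera': 'handheld'}
```

Giving every variant a stable ID makes it straightforward to join the generated clips with whatever audience metrics come back, so the final creative choice can be traced to the data that informed it.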

The Ethical and Authorship Debate

AI-native cinematography raises critical questions the industry is actively wrestling with. In a recent survey, 76% of respondents said AI-generated content should not be classified as art, while 45.6% of artists believe AI tools will dramatically improve creative practice. These positions can coexist because AI works best as an amplifier rather than an author: the cinematic vision still originates with the human director, while AI handles physical execution.

Forbes’ analysis argues that AI is moving audience data upstream, reshaping not just how films are made but how stories are imagined, financed, and greenlit, with predictive modeling influencing creative decisions from the concept stage onward. The directors who thrive will be those who master AI as a creative instrument rather than a production shortcut, using its native language to tell stories that could not be told any other way.

FAQs

Q1: What is AI-native cinematography?

A new visual grammar in which AI generates camera movements, lighting shifts, and pacing that would be impossible in physical filmmaking, because it is not constrained by lenses, gravity, or equipment.

Q2: How is AI changing pre-production for Indian filmmakers?

Storyboards, moodboards, location previews, and camera-angle tests can now be generated almost instantly from AI prompts, replacing weeks of static pre-visualization.

Q3: Can AI match professional cinematography quality in 2026?

In many applications, yes. Tools like Veo 3.1 and Sora 2 Pro execute dolly moves, crane reveals, and handheld sequences with cinematographic understanding.

Q4: What makes AI-native camera language different from traditional filmmaking?

Unbroken scale transitions, emotionally adaptive lighting, and real-time direction are free of physical constraints, making possible camera movements that no crew could execute manually.

Q5: Does using AI cinematography reduce creative control for directors?

No. Directors describe blocking, movement, and emotional beats in prompts, and AI executes that vision, amplifying creative output rather than replacing directorial judgment.