AI-Integrated Post-Production: Why Editing, VFX, and Continuity Fixes Are Merging into One Workflow

Post-production is no longer a linear handoff from editor to VFX artist to finishing team; AI is turning it into one connected workflow where creative and technical fixes happen in parallel. Studios are increasingly treating editing, clean-up, continuity correction, and visual enhancement as part of the same post pipeline because AI tools can now automate or accelerate tasks that used to be split across different departments and timelines.

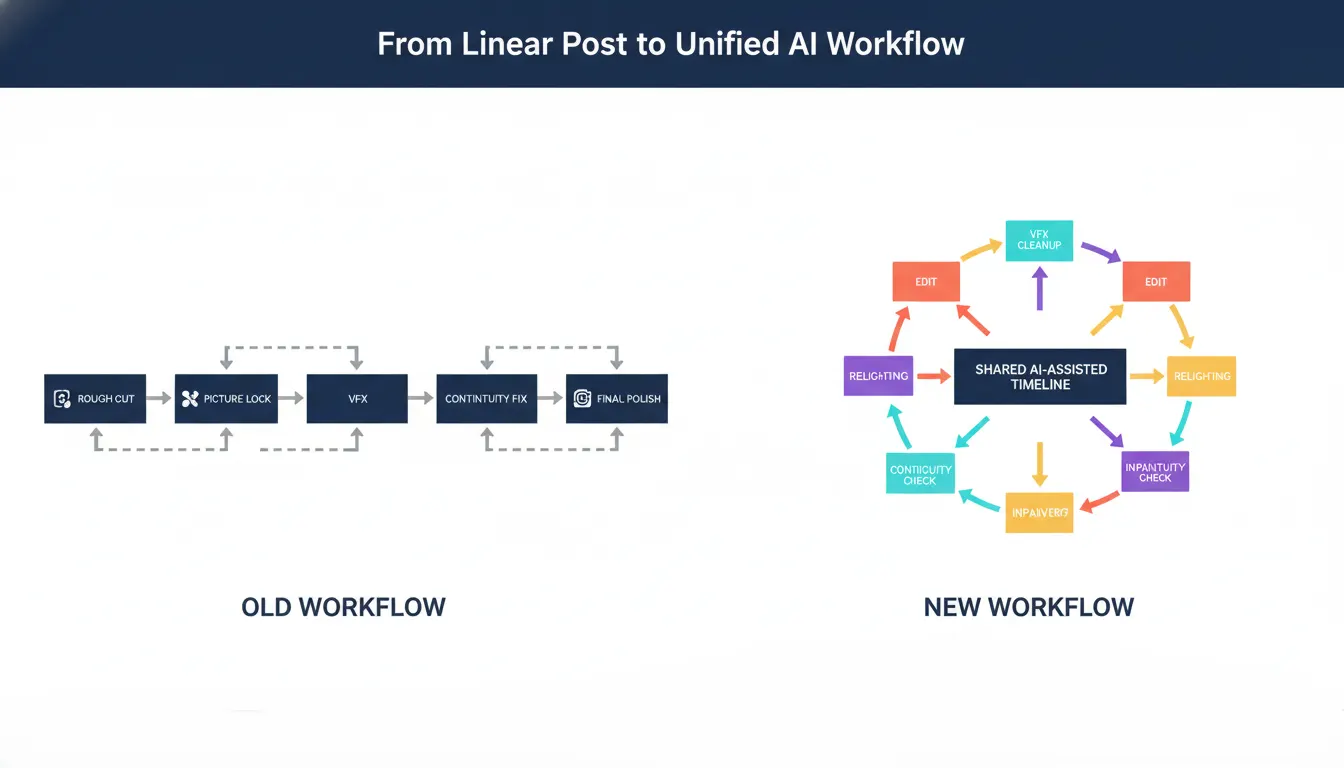

Why the old workflow is breaking

Traditional post-production relied on rigid sequencing: first rough cut, then picture lock, then VFX, then final polish. That model made sense when every correction required manual labor and each department needed fixed inputs before starting work. AI changes that because editors can now test pacing, cleanup, performance tweaks, and even visual fixes much earlier, without waiting for the entire cut to be locked. The result is a continuous process in which editorial and technical decisions are made together rather than one after another.

Editing is now the control center

Text-based editing is a big reason for this shift. Editors can work directly from transcripts, remove filler, rearrange scenes, and tighten pacing without scrubbing endlessly across timelines, which reduces revision cycles and speeds up collaboration. But the bigger change is that the edit timeline is becoming the place where VFX-adjacent choices also begin: background cleanup, reframing, shot replacement, and continuity checks can now be triggered much earlier in the process. That is why modern post workflows increasingly start from AI automation rather than from manual rough cutting alone.
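To make the transcript-driven idea concrete, here is a minimal sketch of how filler removal could turn into a cut list. It assumes a transcript with per-word timestamps is already available from some speech-to-text step. The `Word` type, the `FILLERS` set, and the `max_gap` threshold are all hypothetical names and values chosen for illustration, not part of any particular editing tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Word:
    text: str
    start: float  # seconds from the start of the clip
    end: float


# Assumption: a tiny illustrative filler list; a real list would be editorial.
FILLERS = {"um", "uh", "erm"}


def keep_ranges(words, fillers=FILLERS, max_gap=0.3):
    """Return merged (start, end) ranges that cover every non-filler word.

    Words separated by no more than `max_gap` seconds are merged into one
    range, so the result reads like a simple cut list.
    """
    ranges = []
    for w in words:
        if w.text.lower().strip(".,!?") in fillers:
            continue  # drop the filler word from the cut
        if ranges and w.start - ranges[-1][1] <= max_gap:
            ranges[-1] = (ranges[-1][0], w.end)  # extend the current range
        else:
            ranges.append((w.start, w.end))  # start a new range
    return ranges


sample = [
    Word("So", 0.0, 0.2),
    Word("um", 0.3, 0.8),
    Word("we", 0.9, 1.0),
    Word("cut", 1.05, 1.3),
]
cut_list = keep_ranges(sample)  # [(0.0, 0.2), (0.9, 1.3)]
```

The output is only a list of time ranges to keep. Turning it into an actual timeline edit would depend on the editing software in use, which this sketch does not cover.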

VFX and cleanup are moving upstream

AI tools are already being used for rotoscoping, clean plate creation, matte extraction, relighting, object removal, and set extension prototyping, all of which used to sit deeper in the VFX pipeline. With node-based systems and generative inpainting, artists can remove distractions, patch backgrounds, create sky replacements, and generate depth maps much faster than before. That means continuity fixes no longer need to wait until late finishing; they can be solved while the cut is still evolving. In practical terms, a bad prop mismatch, inconsistent lighting, or distracting object is now treated as a live edit problem, not a downstream emergency.

Continuity is becoming data-driven

Continuity used to depend heavily on script supervisors, sharp eyes, and painful manual review. AI-assisted workflows now improve that process by making it easier to compare takes, align scene variations, and preserve character, lighting, and brand consistency across edits. This matters even more in high-volume formats where small mismatches multiply across many episodes. Studios building serialized content can use modular production pipelines and AI-assisted post together, so continuity becomes part of the system instead of a last-minute repair job.
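One way to picture continuity as data is a check that compares tagged attributes between consecutive shots of the same scene. The sketch below assumes each shot already carries attribute tags, whether from script-supervisor notes or from an upstream detection step. The shot structure, the attribute names, and the `find_mismatches` function are hypothetical and exist only for illustration.

```python
def find_mismatches(shots):
    """Flag attributes that change between consecutive shots in a scene.

    `shots` is an ordered list of dicts with keys "id", "scene", and
    "attrs" (a dict of attribute name to observed value). Only attributes
    present in both shots are compared.
    """
    last_by_scene = {}
    issues = []
    for shot in shots:
        prev = last_by_scene.get(shot["scene"])
        if prev is not None:
            shared = prev["attrs"].keys() & shot["attrs"].keys()
            for key in sorted(shared):
                before, after = prev["attrs"][key], shot["attrs"][key]
                if before != after:
                    issues.append((prev["id"], shot["id"], key, before, after))
        last_by_scene[shot["scene"]] = shot
    return issues


shots = [
    {"id": "12A", "scene": 12, "attrs": {"mug": "left hand", "jacket": "on"}},
    {"id": "12B", "scene": 12, "attrs": {"mug": "right hand", "jacket": "on"}},
    {"id": "13A", "scene": 13, "attrs": {"jacket": "off"}},
]
issues = find_mismatches(shots)
# [("12A", "12B", "mug", "left hand", "right hand")]
```

A flagged change is not always an error, since a character may move a prop on purpose, so a list like this is best read as a set of candidates for human review.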

Why this matters for studios in 2026

The biggest gain is not just speed; it is coordination. When editing, VFX, and continuity operate inside one AI-assisted loop, studios reduce handoff delays, shorten approval cycles, and keep more creative control over the final cut. Small teams benefit because they can achieve results that previously needed several specialists, while larger studios benefit by moving high-volume content faster without losing consistency. For production houses handling short-form series, branded videos, or AI-heavy projects, unified post is becoming the new baseline, especially when paired with traditional production skills that keep storytelling, taste, and judgment at the center.

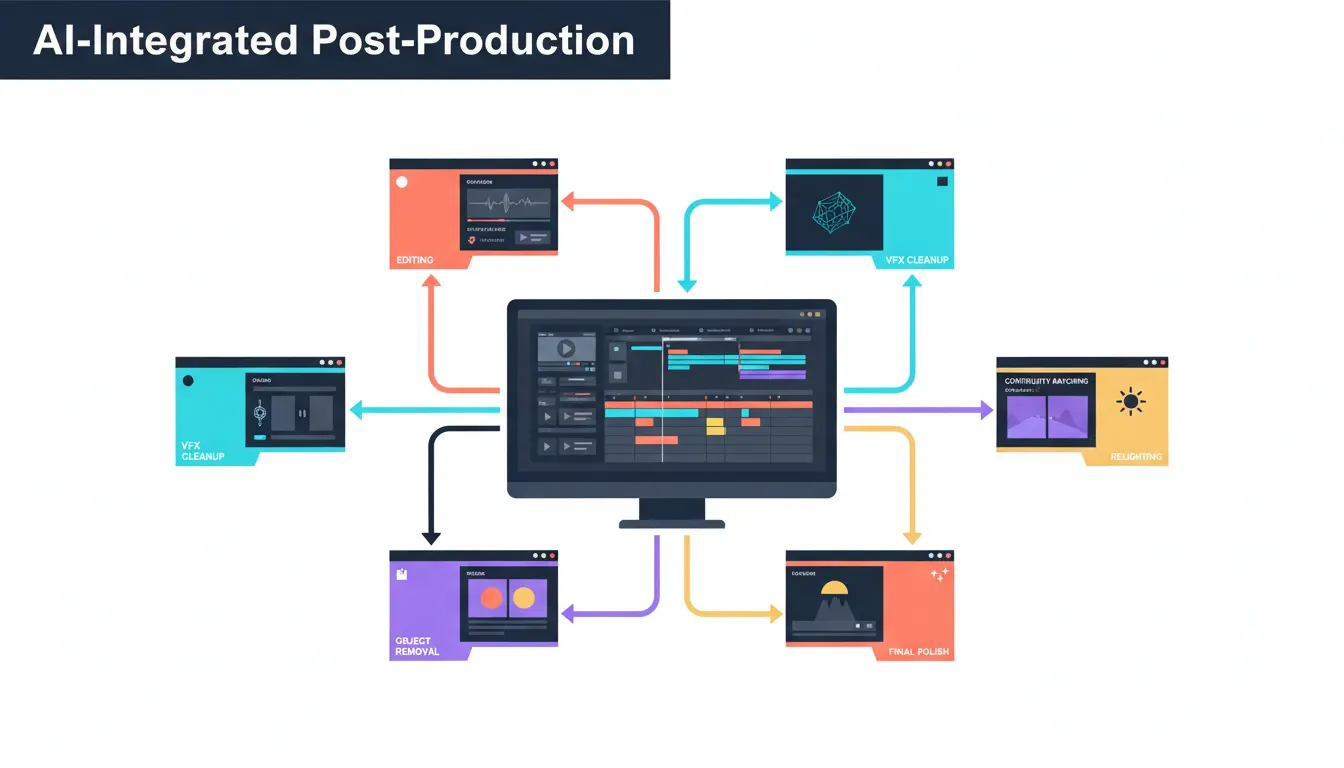

What a unified workflow looks like

A practical AI-integrated post workflow usually works like this:

- Edit from transcript or scene text to create a rough cut faster.

- Flag continuity issues, unwanted objects, or environmental inconsistencies during the edit itself.

- Run cleanup, relighting, rotoscoping, or inpainting without waiting for full picture lock.

- Review pacing, polish visuals, and prepare platform-specific outputs in parallel instead of in separate stages.

- Finalize with human creative judgment so the work still feels intentional, not automated.
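The steps above can be sketched as a small dependency graph, which shows which tasks could run side by side instead of in strict sequence. The task names and the dependencies between them are assumptions made for illustration, and real pipelines will differ. The sketch uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later).

```python
from graphlib import TopologicalSorter

# Assumption: each task maps to the set of tasks it must wait for.
PIPELINE = {
    "rough_cut": set(),
    "flag_issues": {"rough_cut"},
    "cleanup": {"flag_issues"},
    "pacing_review": {"rough_cut"},
    "platform_outputs": {"cleanup", "pacing_review"},
    "human_final_pass": {"platform_outputs"},
}


def parallel_waves(deps):
    """Group tasks into waves; tasks in the same wave have no unmet
    dependencies and could be worked on at the same time."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable order
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = parallel_waves(PIPELINE)
# [["rough_cut"],
#  ["flag_issues", "pacing_review"],
#  ["cleanup"],
#  ["platform_outputs"],
#  ["human_final_pass"]]
```

In this hypothetical graph, issue flagging and pacing review start as soon as a rough cut exists, and the human pass stays last, in line with the final step in the list.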

Post-production is merging because AI removes the old walls between departments. The studios that adapt first will not just finish faster; they will build a more flexible, more resilient, and more scalable creative workflow for everything that comes next.

FAQs

Q1: What is AI-integrated post-production?

It is a workflow where editing, VFX, cleanup, and continuity fixes happen together instead of as separate late-stage steps.

Q2: Why are editing and VFX merging now?

Because AI tools can automate cleanup, relighting, masking, and revisions directly inside or alongside the edit process.

Q3: How does AI help with continuity fixes?

It helps compare takes, preserve visual consistency, and catch problems earlier so they can be corrected before final finishing.

Q4: Does AI replace post-production teams?

No. It reduces repetitive tasks and speeds up execution, while human teams still guide taste, story, and final judgment.

Q5: Who benefits most from unified AI post workflows?

Studios producing high volumes of short-form, branded, or serialized content benefit the most, because the value of speed and consistency grows with scale.